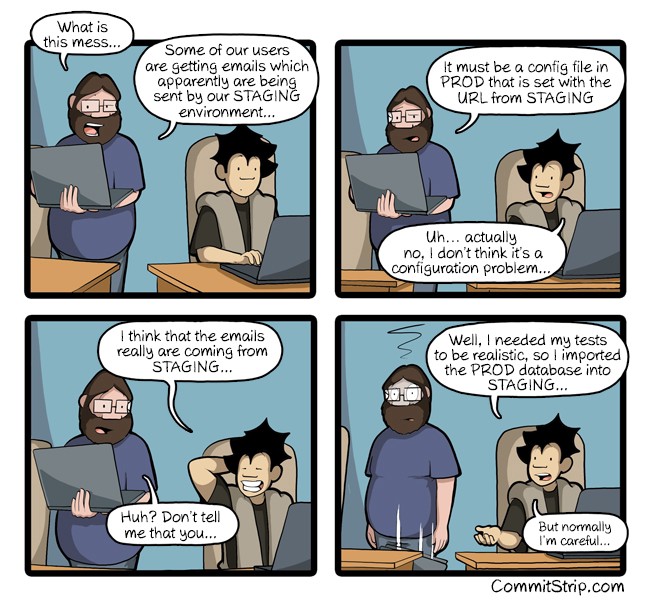

In the summer of 2021, HBO accidentally sent an email to millions of past and current subscribers with the body “This message is used by integration tests only”.

The internet was gracious about the mistake, which an intern had made, but it was an interesting reminder of the challenges of managing environments.

For continuous deployment, teams often create environments for development, staging and production. The development environment optimizes for rapid iteration. Staging is where you discover the known-unknowns. Production optimizes for reliability and security.

Two philosophies: Per Environment Config vs. Global Config

Teams take different approaches to managing multiple environments. Some draw hard boundaries between environments and maintain separate, distinct config for each one. We’ll call this “Per Environment Config”.

Others want every environment to resemble Production as closely as possible, allowing only the differences essential to each environment’s distinct purpose. We’ll call this “Global Config”.

While Statsig can support both approaches, it is optimized for the latter. We believe this is a better way to manage feature gates and experiments across environments, because it optimizes for engineering efficiency: fewer errors and faster troubleshooting.
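To make the Global Config model concrete, here is a minimal Python sketch of a gate that has shared rules plus environment-specific exceptions. It is not Statsig's implementation or API; the names `Rule`, `GlobalGate`, and `evaluate`, and the assumed evaluation order (environment exceptions first, then the shared rules, then the default), are illustrative only.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


# Hypothetical model of a feature gate; not Statsig's actual API.
@dataclass
class Rule:
    name: str
    condition: Callable[[dict], bool]  # receives user attributes, True when the rule matches
    passes: bool                       # gate result when this rule matches


@dataclass
class GlobalGate:
    name: str
    all_rules: List[Rule]  # shared rules: apply in every environment, including Production
    env_rules: Dict[str, List[Rule]] = field(default_factory=dict)  # exceptions by environment
    default: bool = False

    def evaluate(self, user: dict, env: str) -> bool:
        # Assumed order: environment exceptions, then shared rules, then the default.
        for rule in self.env_rules.get(env, []) + self.all_rules:
            if rule.condition(user):
                return rule.passes
        return self.default


gate = GlobalGate(
    name="new_checkout",
    all_rules=[Rule("employees", lambda u: u.get("email", "").endswith("@example.com"), True)],
    env_rules={"development": [Rule("everyone_in_dev", lambda u: True, True)]},
)

customer = {"userID": "42", "email": "jane@gmail.com"}
print(gate.evaluate(customer, "production"))   # False: only the shared rule applies
print(gate.evaluate(customer, "development"))  # True: the dev exception matches first
```

Because the shared rules and every environment's exceptions live on one object, reading `gate` shows all of the environment differences in one place.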

Advantages

Some advantages of the Global Config approach (with environment-specific exceptions) include:

You can look at a feature gate in one place and see all exceptions by environment, without navigating between screens for each environment and diffing them in your head.

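Feature Gates and Experiments are typically configured with default/fallback values. With separate per-environment config, a missing configuration for one environment can easily go unnoticed because the app still appears to work using the defaults; with Global Config, the shared rules apply to every environment.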
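The fallback problem is easy to reproduce with a Per Environment Config model, where each environment holds its own independent values. In this hypothetical sketch (the `per_env_config` dictionary and the `gate_value` and `missing_environments` helpers are assumptions for illustration), a lookup for an unconfigured environment silently returns the default, so an explicit check is needed to surface the gap.

```python
# Hypothetical Per Environment Config: each environment has its own, separate values.
ENVIRONMENTS = ["development", "staging", "production"]

per_env_config = {
    "development": {"new_checkout": True},
    "production": {"new_checkout": True},
    # "staging" was never configured for this gate.
}


def gate_value(config: dict, env: str, gate: str, default: bool = False) -> bool:
    # Falls back to the default without any error when the environment or gate is absent.
    return config.get(env, {}).get(gate, default)


def missing_environments(config: dict, gate: str) -> list:
    # Lists environments where the gate would silently fall back to its default.
    return [env for env in ENVIRONMENTS if gate not in config.get(env, {})]


print(gate_value(per_env_config, "staging", "new_checkout"))  # False, with no warning
print(missing_environments(per_env_config, "new_checkout"))   # ['staging']
```

In a Global Config model there is no per-environment entry to forget: the shared rules are evaluated everywhere, and only intentional exceptions are added per environment.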

An extra bonus is the ability to test feature gates with real data when you’re troubleshooting why a user passed or failed a gate. Pass in the JSON with the user attributes your app is sending, and we’ll look across all environments and pinpoint the specific rule that applies to the user and causes the pass/fail result!
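Conceptually, this kind of troubleshooting means evaluating a gate's rules against a user object and reporting which rule decided the result in each environment. The sketch below illustrates that idea in Python; it does not call Statsig's API, and the rule layout, the `explain` function, and the "attribute equals value" condition format are assumptions made for illustration.

```python
import json
from typing import Dict, List, Optional, Tuple

# Hypothetical rule format: (rule name, attribute, expected value, result if matched).
RuleSpec = Tuple[str, str, str, bool]

ALL_RULES: List[RuleSpec] = [("beta_country", "country", "NZ", True)]
ENV_RULES: Dict[str, List[RuleSpec]] = {"staging": [("qa_team", "team", "qa", True)]}
ENVIRONMENTS = ["development", "staging", "production"]


def explain(user_json: str) -> Dict[str, Tuple[bool, Optional[str]]]:
    """For each environment, return (result, matching rule name, or None if the default applied)."""
    user = json.loads(user_json)
    report = {}
    for env in ENVIRONMENTS:
        result: Tuple[bool, Optional[str]] = (False, None)  # default: fail, no rule matched
        # Environment-specific rules are checked before the shared rules (an assumption).
        for name, attribute, expected, passes in ENV_RULES.get(env, []) + ALL_RULES:
            if user.get(attribute) == expected:
                result = (passes, name)
                break
        report[env] = result
    return report


# The same JSON payload the app would send for this user.
print(explain('{"userID": "7", "team": "qa", "country": "US"}'))
# {'development': (False, None), 'staging': (True, 'qa_team'), 'production': (False, None)}
```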

Wrinkles (and mitigation)

One potential issue with the Global Config approach arises when change-management approvals are required. Statsig allows teams to require review of changes before they’re committed.

This is useful in production where stability is paramount, but can slow down iteration in development and staging environments.

The solution is to create rules explicitly for the Dev and Staging environments (as in the Global Config example above). Changes to Dev and Staging environment rules do not require approval, so engineers can iterate rapidly. Changes to the “All Rules” section can affect Production and will continue to require approval.
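The approval split amounts to a simple policy check on which section a change touches. This is a hypothetical sketch, not Statsig's review workflow: the `Change` type, the section names, and the single `approvals_enabled` switch are assumptions for illustration.

```python
from dataclasses import dataclass

# Sections whose rules can change without review, per the mitigation described above.
UNREVIEWED_SECTIONS = {"development", "staging"}


@dataclass
class Change:
    gate: str
    section: str  # "all_rules" or an environment name such as "development"


def requires_approval(change: Change, approvals_enabled: bool = True) -> bool:
    if not approvals_enabled:
        return False
    # Anything outside the Dev/Staging sections (e.g. "all_rules") can affect Production,
    # so it keeps the review step.
    return change.section not in UNREVIEWED_SECTIONS


print(requires_approval(Change("new_checkout", "development")))  # False
print(requires_approval(Change("new_checkout", "all_rules")))    # True
```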

Note that you aren’t required to create an environment-specific section; you can instead use Environment Tier as a condition in regular rules. Adding explicit sections for Dev and Staging rules makes the distinction easier to see and removes the need for approvals on those rules (if approvals are enabled).
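For comparison, here is the alternative the note mentions: a regular rule that uses the environment tier as one of its conditions. In this hypothetical Python sketch (the `environment_tier` parameter and the rule structure are assumptions), the tier-conditioned rule yields the same result a Dev-only section would. Because it sits among the regular rules, though, editing it is still a change to the shared rules.

```python
from typing import Callable, List, Tuple

# Hypothetical regular rules: (name, condition over user attributes and tier, result).
Condition = Callable[[dict, str], bool]

REGULAR_RULES: List[Tuple[str, Condition, bool]] = [
    # Environment tier used as an ordinary condition instead of a separate Dev section.
    ("dev_everyone", lambda user, tier: tier == "development", True),
    ("employees", lambda user, tier: user.get("email", "").endswith("@example.com"), True),
]


def evaluate(user: dict, environment_tier: str, default: bool = False) -> bool:
    # First matching rule decides the result; otherwise the default applies.
    for _name, condition, passes in REGULAR_RULES:
        if condition(user, environment_tier):
            return passes
    return default


print(evaluate({"email": "jane@gmail.com"}, "development"))  # True
print(evaluate({"email": "jane@gmail.com"}, "production"))   # False
```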

Reach out!

See an issue that the approach we’re suggesting doesn’t address? Reach out; I’d love to hear from you and learn more!