Modern tech companies are rapidly changing the way they build their customer data infrastructure.

There are two key factors influencing this rapid modernization:

- Businesses want to make faster and better decisions based on accurate and fresh information.
- Businesses want to leverage rapidly evolving and automated data intelligence inside their customer-facing applications.

A modernized customer data stack addresses these needs by ensuring that data is portable and consistent, but every business and use case has different needs; there is no one-size-fits-all solution. In this post, we’ll discuss a typical evolution from “walk” to “jog” to “run,” and some things to consider along the way.

“Walk” Approach to Customer Data Infrastructure

In the early stages of a digital business, product-market fit and customer acquisition come first, and customer data infrastructure may be an afterthought. As the business grows and its use cases mature, businesses that haven’t prioritized customer data infrastructure end up with something like this:

Each of the digital properties shown above needs a baseline set of user data to function, and over time each of them generates its own unique set of valuable user data (e.g., a user opened an email, clicked an email, submitted a help desk ticket, completed a payment or entered an experiment).

Generally, there are two types of digital properties:

- Owned properties: websites, mobile applications and server-side applications. If a business generates calculated metrics, model outputs or cohorts in a warehouse, the warehouse becomes a data producer as well.
- Cloud properties: help desks, payment systems, marketing tools, A/B testing tools, ad platforms, CRMs, etc.

Since each of these digital properties is both a producer and a consumer of data, the “walk” approach to customer data infrastructure tries to maintain a separate point-to-point connection between each pair of digital properties. Customer data may be collected directly using SDKs or a tag manager, and may be centralized using ETL. (For example: Did a user convert? We must update our CRM, turn off advertising and inform our A/B testing platform! Does a user have active help desk tickets and a high likelihood-to-churn score? We should stop marketing to them, inform our A/B testing platform and let the success team know! Oh yes, and everything must also go to the warehouse!)

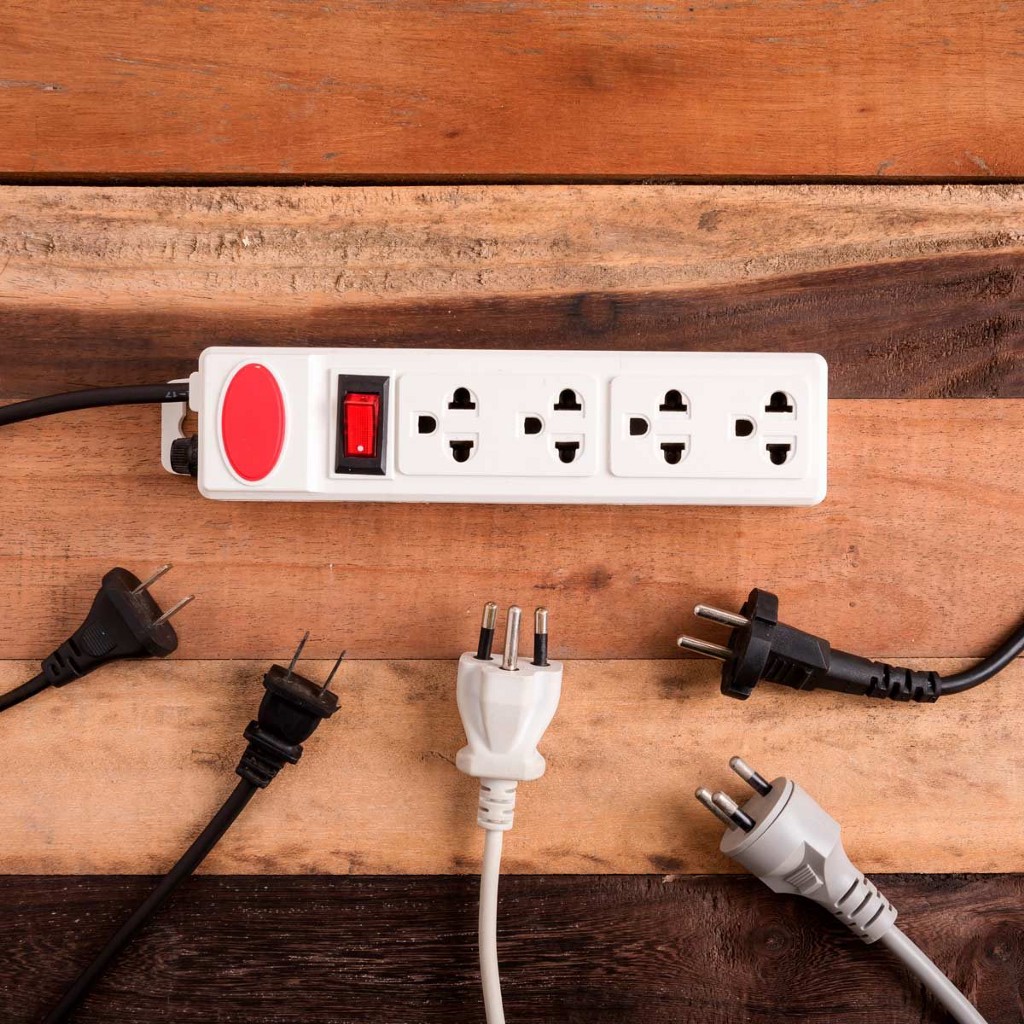

The more the data stack grows and the more complex customer engagement cycles become, the less manageable the “walk” style of customer data infrastructure becomes. I liken it to a messy server rack set up by the “last IT guy.”

Ultimately, businesses begin to outgrow the “walk” approach to customer data infrastructure for the following reasons:

1. Too many custom pipelines, SDKs and transformations decrease the fidelity and manageability of data over time.
2. It’s impossible to enforce schema standardization across channels without introducing latency (everyone loves a bolt-on MDM… right?).
3. It’s impossible to resolve user identities across channels without complex user identity services, which also introduce latency.
4. End users of internal applications and data engineers suffer.

“Jog” Approach to Customer Data Infrastructure

The “jog” approach to customer data infrastructure solves the aforementioned problems by creating a centralized data supply chain:

When an owned digital property (web, mobile, server) generates data, that data is sent to the Collection layer, which passes it on to the Application and Storage layers. Similarly, when a digital property in the Application layer generates data, it sends that data to the Collection layer, which forwards it to the other digital properties in the Application and Storage layers.
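To make the fan-out concrete, here is a minimal Python sketch of a Collection layer acting as a hub. It assumes a hypothetical `Event` shape, a hypothetical two-field schema check and in-process handler functions standing in for real destinations. It is not how any particular collection product works; it only illustrates the pattern of validating once at a central point and forwarding to every other property.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class Event:
    # Hypothetical event shape; real collection tools define their own schemas.
    user_id: str
    name: str
    properties: dict = field(default_factory=dict)
    source: str = ""  # the digital property that produced the event

def validate(event: Event) -> None:
    # Hypothetical minimal schema: every event needs a user and an event name.
    if not event.user_id or not event.name:
        raise ValueError(f"event violates schema: {event!r}")

class CollectionLayer:
    """Hub that validates each event once, then forwards it to every other destination."""

    def __init__(self) -> None:
        self.destinations: Dict[str, Callable[[Event], None]] = {}

    def subscribe(self, name: str, handler: Callable[[Event], None]) -> None:
        self.destinations[name] = handler

    def track(self, event: Event) -> List[str]:
        validate(event)  # schema enforced at a single central point
        delivered = []
        for name, handler in self.destinations.items():
            if name == event.source:
                continue  # don't echo data back to the property that produced it
            handler(event)
            delivered.append(name)
        return delivered

# Usage: one hub, several hypothetical destinations.
received: Dict[str, List[str]] = {}
hub = CollectionLayer()
for dest in ["crm", "ab_testing", "help_desk", "warehouse"]:
    hub.subscribe(dest, lambda e, d=dest: received.setdefault(d, []).append(e.name))

print(hub.track(Event("u1", "payment_completed", source="web")))
# ['crm', 'ab_testing', 'help_desk', 'warehouse']
print(hub.track(Event("u1", "ticket_opened", source="help_desk")))
# ['crm', 'ab_testing', 'warehouse']
```

Adding a new tool in this sketch means one `subscribe` call rather than a new pipeline from every existing property.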

By creating a centralized data supply chain, you move schema enforcement and identity resolution to a single central point: the Collection layer. In most cases, you also reduce the technical debt created by maintaining individual connections between storage, owned properties and cloud properties. My favorite analogy for the “jog” approach to customer data infrastructure is:

“Every time you need to plug something in and there’s no more outlet space, would you rather continually install new outlets into the wall or just buy a power strip?”
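A rough way to see why the power strip wins is to count connections. The sketch below assumes the worst case for the “walk” approach, where every property both sends data to and receives data from every other property, so each ordered pair needs its own pipeline; with a central Collection layer, each property needs one connection in and one out. Real stacks rarely need every pair connected, so treat the point-to-point numbers as an upper bound.

```python
def point_to_point_pipelines(n: int) -> int:
    # "Walk": every ordered (producer, consumer) pair needs its own pipeline.
    return n * (n - 1)

def hub_pipelines(n: int) -> int:
    # "Jog": each property has one connection into and one out of the Collection layer.
    return 2 * n

for n in (4, 8, 16):
    print(n, point_to_point_pipelines(n), hub_pipelines(n))
# 4 12 8
# 8 56 16
# 16 240 32
```

Point-to-point grows quadratically while the hub grows linearly. At three properties the two are equal (6 vs. 6), which is consistent with the “walk” approach being workable in the early stages.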

In theory, the “jog” approach to customer data infrastructure is a great way to solve this problem, and for medium-sized businesses it can work incredibly well. In practice, there are many variations and overlaps between the Storage layer, the Collection layer and the Application layer. As time goes on, and particularly for larger digital businesses, I speculate we will see a continued blurring of the lines between what I’ve called the Collection layer and the Storage layer. The three main reasons for this are:

1. By moving schematization into the Collection layer, businesses sacrifice some flexibility in the data model. This can degrade the usability and effectiveness of a subset of downstream tools in the Application layer. As a quick clarification, there will always be tools in the Application layer that require their own unique data structure or interactivity in the client (heat mapping, chat tools, etc.); that is a separate challenge. The point here is simply that the data model enforced in the Collection layer may not always align with the data model expected by tools in the Application layer.

2. The Collection layer assumes there is no existing customer database of record and tries to create one from the data it ingests. In most cases, it is not cost-efficient, flexible or privacy-compliant for your customer database of record to live inside the Collection layer: you cannot query the data, you cannot delete data in any meaningful capacity, and you are up-charged on the raw storage cost. Ultimately, businesses end up with parallel storage in the Collection layer and in their own Storage layer.

3. Implementing and adopting a Collection layer that enforces a schema is a broad, laborious and iterative process. You have to be committed across business units.

“Run” Approach to Customer Data Infrastructure

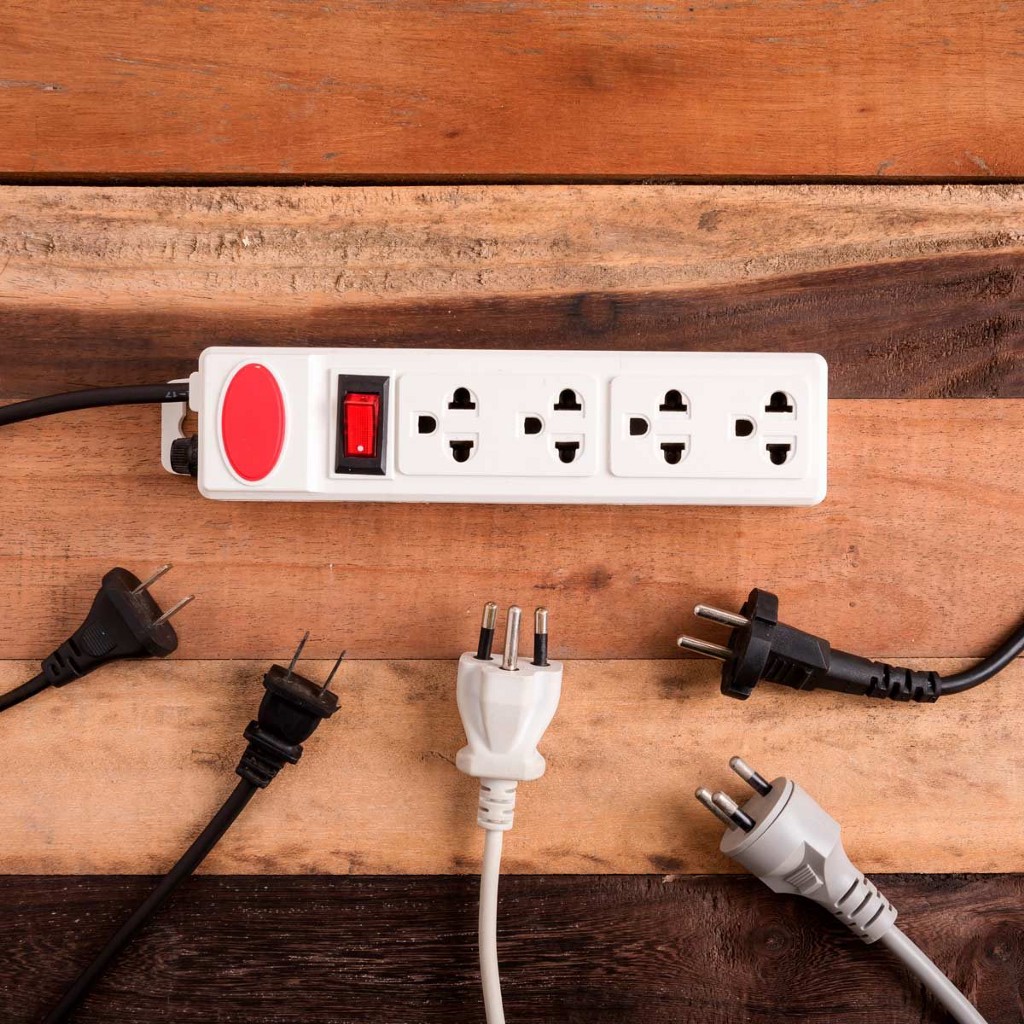

I speculate we will see a continued blurring of the lines between the Collection layer and the Storage layer; specifically, a shift of certain data distribution and enrichment responsibilities away from the Collection layer. The rising popularity of reverse ETL solutions somewhat reinforces this hypothesis. To continue my plug-and-outlet analogy, I would liken using the Storage layer as a partial driver of data distribution to a Tesla coil:

When collection and distribution can be successfully decoupled for applicable use cases, there is no longer a need to reduce every data stream to the same data structure. Applicable data streams can be loaded directly into the Storage layer in their rich, unfiltered state, enriched, and then mapped to the necessary digital properties in the Application layer if and when needed. This way, you don’t sacrifice the richness of each source’s data up front. Similarly, you aren’t firehosing data into each property in the Application layer unless it’s valuable and needed. Each digital property in the Application layer receives only the relevant structure and specified subset of data it needs, so end business users can get the most out of each tool they use. That said, some use cases may require a speed of data delivery that this model may not fully support; those are better served by distribution from the Collection layer or by enterprise-grade streaming solutions like Kafka. This small shift of certain data distribution workloads away from the Collection layer and into the Storage layer looks something like this:
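The collect-raw, enrich, then map flow can be sketched in a few lines of Python. Everything here is hypothetical: plain dicts stand in for warehouse tables, `enrich` stands in for whatever modeling the warehouse does, and `DESTINATION_MAPPINGS` stands in for the field mappings a reverse ETL tool would hold. The point is that raw events keep every field, while each destination receives only the subset it asks for.

```python
from typing import Dict, List

# Raw, unfiltered events land in the Storage layer as-is (hypothetical records).
raw_events: List[dict] = [
    {"user_id": "u1", "event": "payment_completed", "amount": 120.0, "plan": "pro", "utm_campaign": "spring"},
    {"user_id": "u1", "event": "ticket_opened", "priority": "high"},
    {"user_id": "u2", "event": "payment_completed", "amount": 15.0, "plan": "basic"},
]

def enrich(events: List[dict]) -> Dict[str, dict]:
    """Roll raw events up into one profile per user (hypothetical metrics)."""
    profiles: Dict[str, dict] = {}
    for e in events:
        p = profiles.setdefault(e["user_id"], {"user_id": e["user_id"], "revenue": 0.0, "open_tickets": 0})
        if e["event"] == "payment_completed":
            p["revenue"] += e["amount"]
            p["plan"] = e["plan"]
        elif e["event"] == "ticket_opened":
            p["open_tickets"] += 1
    return profiles

# Each hypothetical destination declares the fields it needs and which users it should receive.
DESTINATION_MAPPINGS: Dict[str, dict] = {
    "help_desk": {"fields": ["user_id", "plan", "open_tickets"], "where": lambda p: p["open_tickets"] > 0},
    "crm": {"fields": ["user_id", "plan", "revenue"], "where": lambda p: p["revenue"] > 0},
}

def sync(profiles: Dict[str, dict], destination: str) -> List[dict]:
    """Reverse-ETL-style step: send a filtered, mapped subset to one destination."""
    spec = DESTINATION_MAPPINGS[destination]
    return [{f: p.get(f) for f in spec["fields"]} for p in profiles.values() if spec["where"](p)]

profiles = enrich(raw_events)
print(sync(profiles, "help_desk"))
# [{'user_id': 'u1', 'plan': 'pro', 'open_tickets': 1}]
print(sync(profiles, "crm"))
# [{'user_id': 'u1', 'plan': 'pro', 'revenue': 120.0}, {'user_id': 'u2', 'plan': 'basic', 'revenue': 15.0}]
```

Note that `utm_campaign` stays in the raw events for later modeling even though no destination asks for it today.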

I have seen the most success with this model, where the Collection layer enforces a schema up front and delivers data directly to the parts of the Application layer that don’t need enriched or filtered data sets. Generally, these are near-real-time use cases (messaging, real-time analytics, APM, etc.). Other use cases need on-demand and/or enriched subsets of data (generally web/app personalization, help desk, BI, etc.), which the Storage layer supplies. This model, while effective, can expose businesses to runaway data warehouse costs and potentially messy data in the warehouse if too many business units are modifying or enriching data there, but that’s a conversation for another day. As your business graduates to enterprise scale, streaming solutions (Kafka, etc.) emerge as viable options here.
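The split described above amounts to a per-destination routing decision, sketched below in Python. The two flags and the destination catalog are hypothetical, and the third `STREAMING` branch is an interpretation of the note that fast, enriched delivery is better served by streaming tools like Kafka at enterprise scale, not a prescribed rule.

```python
from enum import Enum
from typing import Dict

class Path(Enum):
    COLLECTION = "Collection layer: streamed, schema enforced up front"
    STORAGE = "Storage layer: enriched in the warehouse, synced on demand"
    STREAMING = "Streaming platform (e.g. Kafka): fast and enriched"

def route(near_real_time: bool, needs_enrichment: bool) -> Path:
    if near_real_time and needs_enrichment:
        # Warehouse syncs may be too slow here; a streaming platform can enrich in flight.
        return Path.STREAMING
    if near_real_time:
        return Path.COLLECTION
    # Everything else can wait for the warehouse to enrich and sync it.
    return Path.STORAGE

# Hypothetical catalog using the example use cases from the text.
DESTINATIONS: Dict[str, Dict[str, bool]] = {
    "messaging": {"near_real_time": True, "needs_enrichment": False},
    "realtime_analytics": {"near_real_time": True, "needs_enrichment": False},
    "apm": {"near_real_time": True, "needs_enrichment": False},
    "personalization": {"near_real_time": False, "needs_enrichment": True},
    "help_desk": {"near_real_time": False, "needs_enrichment": True},
    "bi": {"near_real_time": False, "needs_enrichment": True},
}

for name, flags in DESTINATIONS.items():
    print(f"{name}: {route(**flags).name}")
```

Keeping the decision per destination, rather than per pipeline, makes it easy to move a single tool from the warehouse path to the streaming path as requirements change.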

Ultimately, each design pattern depends on where you are as a business, what resources you have and what you’re trying to achieve. Whether you are in the “walk,” “jog” or “run” stage of customer data infrastructure, Statsig can support your business. If you’re closer to the “walk” stage, we have SDKs that can run on either the client or the server. If you’re closer to the “jog” stage, we have integrations with Segment, mParticle and Heap. And if you’re closer to the “run” stage, we have integrations with Snowflake, Census, Fivetran and Webhooks.